How It Works

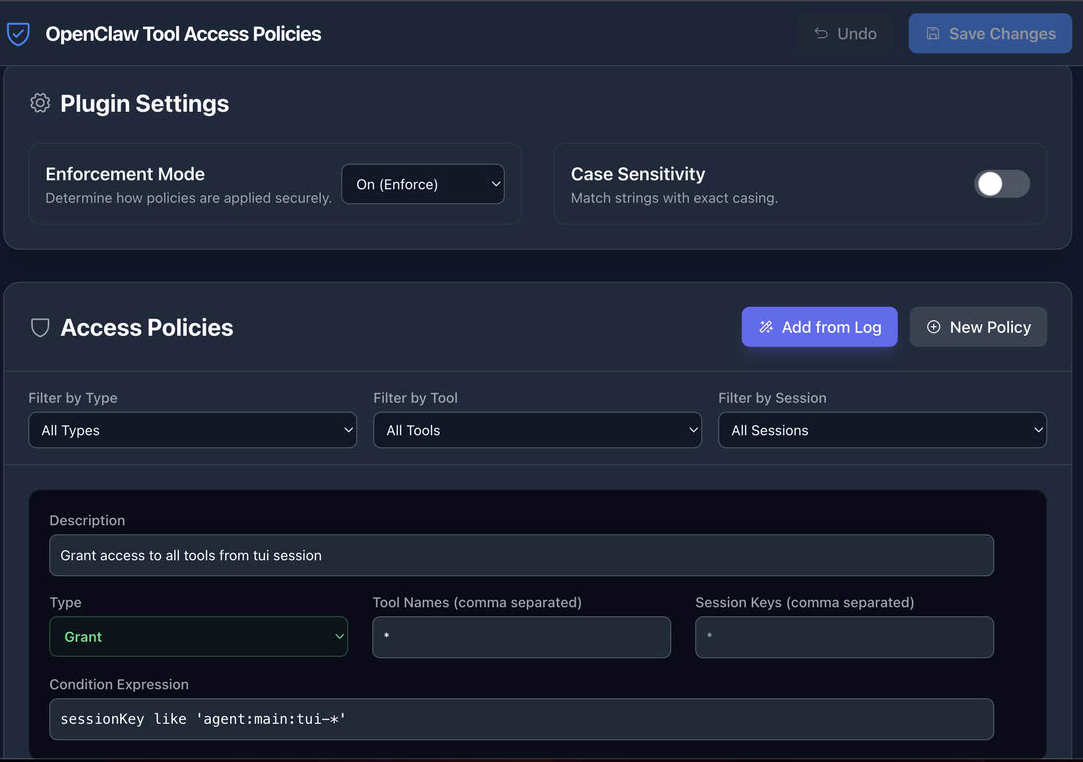

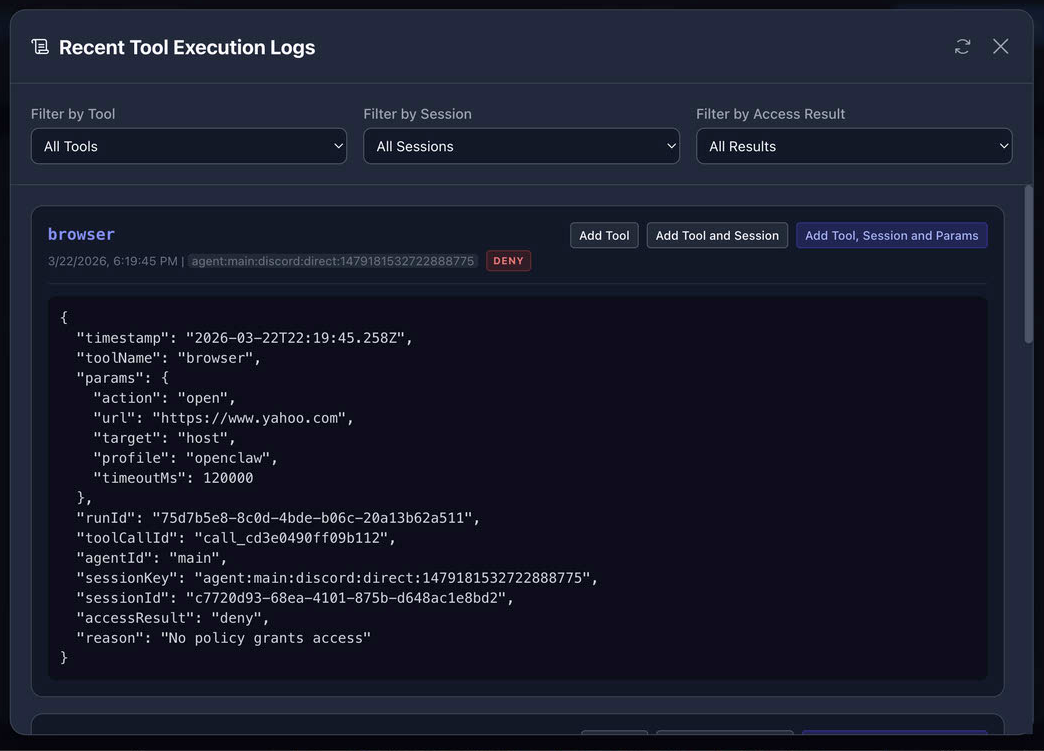

This is where the OpenClaw Fine-Grained Access Control Plugin can help. No single tool can make an autonomous system perfectly safe, but the plugin improves safety and reliability by changing where security is enforced. Instead of relying on the LLM to ignore a malicious instruction, the plugin intercepts every tool call at the protocol level and evaluates it against a fine-grained access policy.

The plugin hooks into the `before_tool_call` event. Before a tool executes (a shell command or a browser action, for example), the plugin evaluates the matching policies and makes a deterministic access decision based on the tool name, the session or channel, and the request parameters.

- Deterministic Decisions: The same tool name, session, and parameters always produce the same access decision.

- Identity-Aware: Uses `sessionKey` to identify the caller's identity and channel context.

- Surgical Precision: Authorizes or blocks a call based on its specific parameters, rather than switching a whole tool on or off.
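
To make the three properties above concrete, here is a minimal sketch of how such a policy check could look. It is not the plugin's actual code or configuration format: the `ToolCall`, `Policy`, and `evaluate` names, the session key strings, the first-match ordering, and the default-deny fallback are all assumptions made for illustration.

```typescript
// Illustrative sketch only. Every type, field, and function name here is
// hypothetical and is not taken from the plugin's real API.
type Effect = "allow" | "deny";

interface ToolCall {
  toolName: string;                 // e.g. "exec" or "browser"
  sessionKey: string;               // identifies the caller and channel context
  params: Record<string, unknown>;  // raw request parameters of the tool call
}

interface Policy {
  id: string;
  tool: string;                     // exact tool name, or "*" for any tool
  sessionPrefix?: string;           // matches when sessionKey starts with this
  when?: (params: Record<string, unknown>) => boolean; // parameter-level check
  effect: Effect;
}

interface Decision {
  effect: Effect;
  reason: string;
}

function matches(policy: Policy, call: ToolCall): boolean {
  if (policy.tool !== "*" && policy.tool !== call.toolName) return false;
  if (policy.sessionPrefix !== undefined &&
      !call.sessionKey.startsWith(policy.sessionPrefix)) return false;
  if (policy.when !== undefined && !policy.when(call.params)) return false;
  return true;
}

// Pure function of (policies, call): the same input always yields the same
// decision. Assumption: the first matching policy wins, and a call that
// matches no policy is denied.
function evaluate(policies: Policy[], call: ToolCall): Decision {
  for (const policy of policies) {
    if (matches(policy, call)) {
      return { effect: policy.effect, reason: policy.id };
    }
  }
  return { effect: "deny", reason: "no matching policy (default deny)" };
}

// Read-only git commands allowed for the hypothetical operator session.
const SAFE_COMMANDS = ["git status", "git log", "git diff"];

const examplePolicies: Policy[] = [
  { id: "allow-read-anywhere", tool: "read", effect: "allow" },
  {
    id: "allow-git-inspect",
    tool: "exec",
    sessionPrefix: "agent:main:main", // made-up session key format
    // Exact match against an allowlist, so "git status; rm -rf /" fails.
    when: (params) =>
      typeof params.command === "string" &&
      SAFE_COMMANDS.indexOf(params.command.trim()) !== -1,
    effect: "allow",
  },
];

const decision = evaluate(examplePolicies, {
  toolName: "exec",
  sessionKey: "agent:main:main",
  params: { command: "git status" },
});
console.log(decision.effect, decision.reason);
```

In this sketch the `exec` tool is neither fully enabled nor fully disabled: the same tool is allowed for one command in one session and denied for everything else. A handler attached to `before_tool_call` could call a function like `evaluate` and block the tool when the result is `"deny"`, but the handler signature is left out here because it depends on the host's plugin API.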